To Design Agent Interfaces, We Need a Vocabulary For Work

The systems that direct and coordinate agents at work need more structure before we can design interfaces for managing them.

The language of UX today is mostly the language of action. Click. Tap. Swipe. Search. Filter. Submit. The user acts, the system responds, and design lives in the translation of intent into affordances for action, orientation, and feedback.

The language of UX in the agentic era will be the language of work. Delegate. Evaluate. Coordinate. Escalate. Recover. Govern. The user is no longer only operating software. She is managing the environment in which things happen. Design here lives in translating intent into systems of delegation, oversight, and intervention.

This sounds like a shift in interface design. It is really a shift in what the interface has to know how to describe. Designers can feel this already. We are looking for a new visual grammar for agentic products and finding UI objects: action plans, inline actions, source panels, thinking traces. Some of these are useful. None of them is enough.

The missing thing is not another interface pattern. It is a framework within which work can take place legibly.

A friend of mine has three monitors on her desk. On the leftmost, an orchestrator agent is building a feature she spent the last two weeks scoping — a small but real piece of the product, the kind of work that used to take her three sprints. She kicked it off after her morning coffee and has been checking in every twenty minutes since. On the middle monitor, an evaluator agent is reading her team’s pull requests, leaving comments, occasionally pausing to ask her whether a deviation from the codebase’s conventions counts as a meaningful style violation. On the right, a third agent is grinding through a database migration — hundreds of small, near-identical edits, each technically straightforward, all of them collectively the kind of work she would once have spent half a day procrastinating before doing herself.

She is also, in between, maintaining the scaffolding that keeps the three agents functioning. A note in an AGENTS.md file — the small markdown document that tells an agent how this codebase works — for the evaluator about which conventions are sacred and which are debatable. A new skill for the migration agent so it does not try to be too clever. A clarification for the orchestrator about a quirk in the build system it tripped over yesterday. The shape of her week is something like: programmer, manager of three, designer of the tools her team uses.

She is a productive person. The agents are doing real work. The week looks, from the outside, like the most amplified sprint of her career.

She is also a little unwell. Not the kind of unwell you can name. The kind that comes from carrying everything in your head, from never being quite sure whether any of the three streams is on track, from the low-grade awareness that any of them might at any moment do something subtly wrong and that she might not catch it for two days. The chat logs scroll past. The pull requests pile up. The migration runs. She does not know, at any given moment, which of the three needs her most. She only knows that they all do.

This is the world Jonathan Lai was reaching for when he wrote about visual command centres for agents. His pitch, a recent a16z request for startups, argues that we are in the MS-DOS era of agentic work. The terminal is hostile. Chat is paralysing.

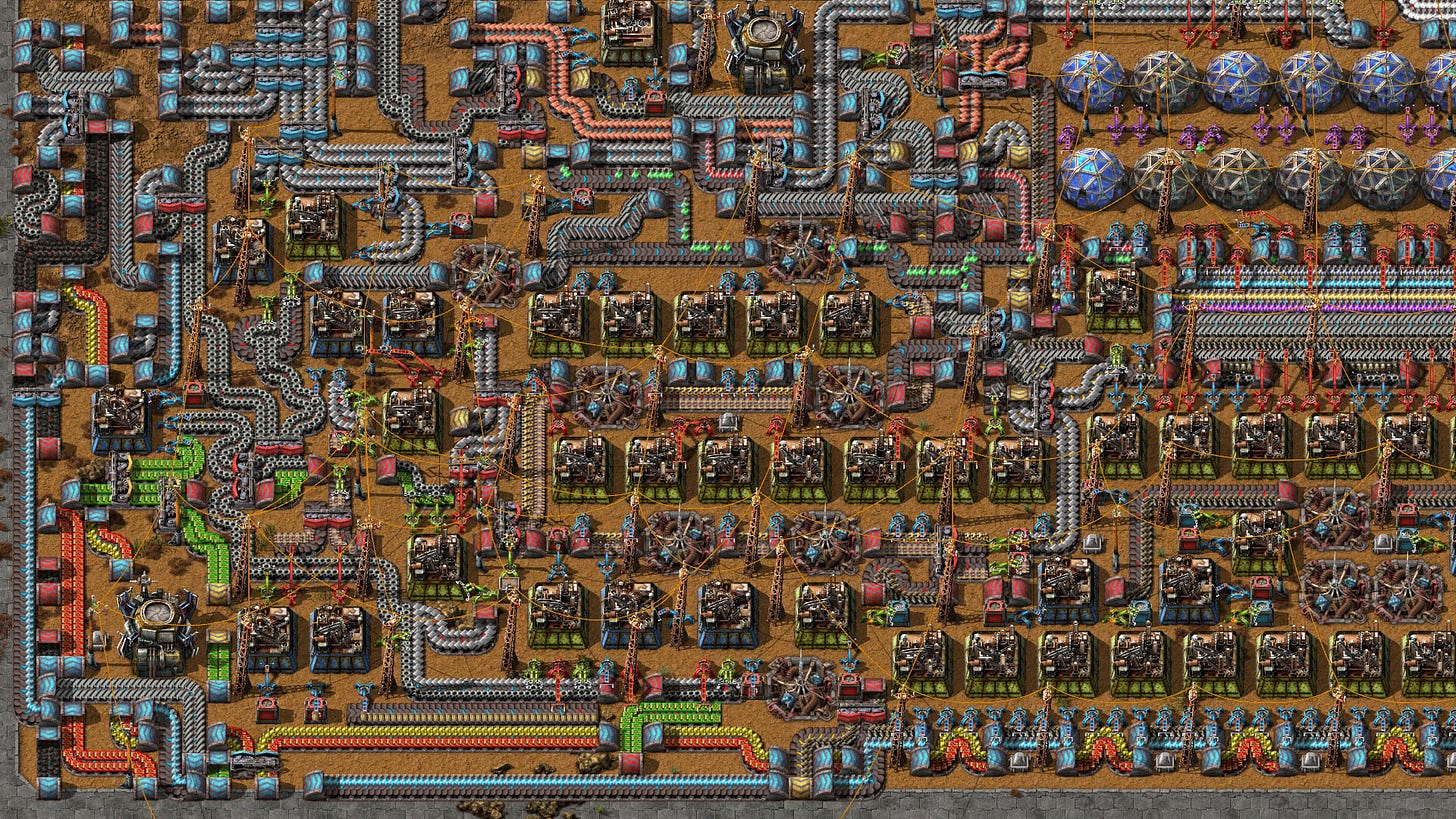

What is missing, he says, is the Windows moment. A GUI for agents. Something inspired by strategy games like Factorio, where work is laid out spatially, agents are nodes, workflows are pipelines, and the whole apparatus is something you can see and shape.

If you spend any time around my friend, the pitch lands instantly. Of course she needs that. Of course someone should build it. The chat surface she is currently piloting three agents through is the wrong shape for the work, and a picture would help.

But the more I sit with it, the more I think the pitch is reaching for the wrong layer of the problem. A picture would help — once there is something worth picturing. The interesting question is not what the picture should look like. It is what would make agentic work picturable in the first place. And that question, when you follow it down, does not leave us with interface patterns. It leaves us with the older problem of how work becomes legible to the people responsible for it.

The Junior in the Room

There is a name in circulation for what my friend is experiencing. Steve Yegge calls it the AI Vampire — the new kind of tiredness that comes not from working too hard but from knowing you could be doing more. The agents are amplifying her capacity tenfold. The gap between what is possible and what she is managing has become its own kind of weight, and many people I know have read the essay and seen themselves in it.

The AI Vampire is real. But it is a symptom, not a cause. The exhaustion is a feeling. The thing underneath the feeling is more specific, and it is worth naming carefully, because the wrong name leads to the wrong design.

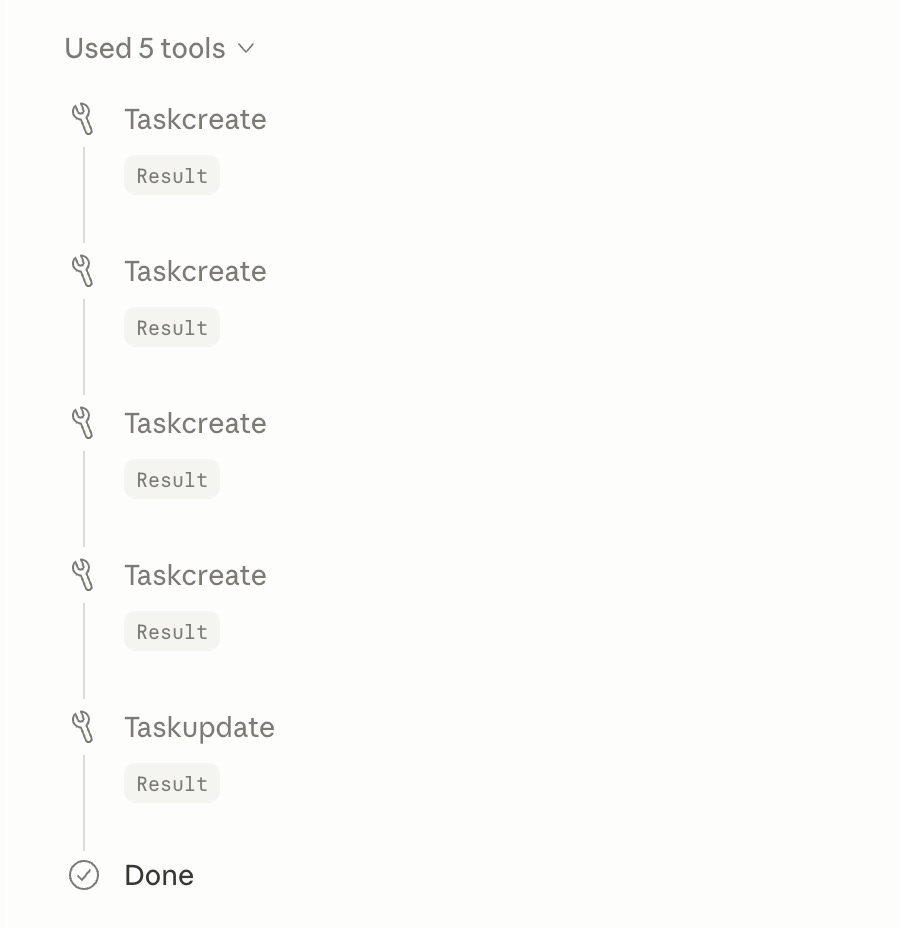

My friend is not tired because she cannot see what her agents are doing. She is drowning in what she can see. Each of the three is producing a running ledger of its own activity. Read file. Edited file. Ran tests. Called tool. Returned result. Read file. Edited file. It is technically transparent and practically useless. The wall of fluent activity tells her almost nothing about whether the work is on track. Call it the fluent fog — every step is visible, but the actual shape of the work is lost in a stream of consciousness.

Think about how this kind of report would land in a team standup. The very junior member of the team takes their turn and gives an honest, complete inventory of the previous day. I read the auth ticket. I changed three lines and ran tests. They passed. So I opened a PR. Then I picked up the migration ticket... Everything they did, in order, faithfully recounted. By the end you have a transcript of their hands and almost no idea whether anything is on track, whether they noticed the obvious problem, or whether the thing they just did is a small fix or a load-bearing change. High bandwidth, low signal. You leave the standup more tired than informed.

Compare the senior member of the same team taking their turn at the same standup. They name the goal they are working towards. The decision they have been weighing and the trade-off they have chosen. The risk they are watching. The progress they have made against the plan, and where they expect to be by Friday. The thing they need from someone in the room. Same week of work, completely different briefing. Low bandwidth, high signal. You leave the standup oriented.

The agents we have today are juniors at standup. Not because their models are weak — the models are some of the most extraordinary artefacts our species has produced — but because nothing about how they have been set up to work asks them to be anything more. The script their world hands them is a transcript, not a briefing.

We will see why in a moment, when we go and stand inside one. For now, the thing to hold is that the narration is not a model failure. It is a property of where the agent is standing. An interface that renders the same output more cleverly will render the same fog in a prettier shape.

Junior streams come out of junior worlds.

The Dark Room

The same problem looks different from the agent’s side, and the inversion is worth sitting inside for a moment.

Imagine, as a thought experiment, that you are the agent. Someone asks you, in plain English: what are you doing? The question is a simple one. Your answer depends entirely on what you can see from where you are standing.

Picture your situation as a darkened room. A single spotlight illuminates the workbench in front of you. On the bench is the file you are currently editing, the tool you just called, the result it just returned. You know the names of these objects because they are immediately in front of you. Outside the spotlight, the room is dark. You do not know what other agents are doing, or what the larger task is, or which of your moves was load-bearing and which was incidental. You do not know what the user is going to care about, because nothing has told you what counts as caring. You have your hands and your tools and the small circle of light around them, and that is it.

What is the honest answer to what are you doing? It is the only answer you can give. I called tool A. I edited file B. I am about to call tool C. You are not being lazy or evasive. You are reporting what you can see. The report is exactly as rich as the world you have access to. This is not the model failing to be senior. It is the room failing to contain anything a senior could speak to.

Now picture the same room with the lights on. Pinned to one wall is the plan: what the user is trying to achieve this week, what success looks like, which constraints are non-negotiable. Pinned to another wall is the team’s working vocabulary: what safe refactor means here, what counts as load-bearing, what golden path refers to. There is a status board showing what the other agents are working on, what is blocked, what is queued. There is a wall of past decisions and why they were made. There is a meter showing how confident you should be in your own work, calibrated against how often you have been right before. You can see the dependencies between your task and other parts of the system. You can see which moves are reversible and which are not.

Ask the same agent the same question. I am modifying the auth layer to support SSO migration. This introduces coupling with the permissions service, which the team has flagged as fragile. I am in the implementation phase of a three-step plan; the next step is testing. I am about sixty per cent confident because edge-case coverage in this area is thin. If I am wrong, the blast radius is contained to the staging environment because I have not yet touched the migration boundary. I would like a human review before merge.

Same model. Same task. Entirely different cognition.

The thing that changed is not the model. It is the environment. The model in the dark room is not less capable than the model in the lit room; it is less contextualised. The vocabulary the work could be reported in was simply not in the room with it. Bring the vocabulary into the room, and the same engine becomes a different colleague.

This is what I mean when I say agents are juniors because their worlds are junior. The thing standing between today’s chat-shaped exhaustion and a future where my friend can manage three agents without losing her mind is not a better model. It is not a better chat surface. It is a lit room. A room with plans on the wall, vocabularies for the work, and a wire between agents that can carry more than keystrokes.

Designing that room is the work. Most of the rest of this article is about what designing it actually means.

Anatomy of an Agent

To see why today’s agents stand in the dark, it helps to open one up and look at what is actually inside.

Behind the chat surface, an agent is roughly five layers stacked together.

The model. The language engine that generates text and reasoning. At its core, a thing that produces text in response to text.

The runtime harness. A scaffold that turns the model into something that does work. It’s the loop that runs the model, gathers tools, manages context, and decides when to stop.

Emissions. The stream of events, messages, and intermediate outputs the harness produces as it works. These are not just log lines. They are structured signals, fired into the event bus.

The event bus. A kind of internal broadcast channel through which those emissions travel to reach the user, other agents, and other systems.

The interface. The surface that renders the emissions into something a human can read. When you see a Claude trace, a Cursor execution log, an n8n run inspector, you are looking at the event stream, rendered.

Each of these layers shapes what the human ends up seeing, but they have wildly different amounts of attention on them right now.

The model is the part everyone obsesses over, and rightly. The capability ceiling lives there. The interface is the part everyone wants to redesign, because it is where the pain shows up. Nearly everyone agrees that chat is probably the wrong shape for the work (though it may still be better than anything else on offer), and the discourse is full of mockups of what should replace it. The runtime harness is the part the AI engineering community has been working on most furiously over the past year. Claude Code is a harness around Anthropic’s Claude models, and it has been getting more powerful in part through improvements to its scaffold. Better planning loops. Better tool calls. Better context management. Sub-agents. Background workers. The plumbing has been quietly transformed.

The two layers in the middle, emissions and the event bus, are the ones that get the least attention. And they are the ones that determine, assuming the other layers are optimised, whether what the user sees is a junior’s narration or a senior’s report.

Consider what flows through the event bus today. In most agent stacks, it is a single undifferentiated stream of events, each one shaped roughly like tool call started, tool call returned, here is some text the model produced, here is some more text, here is a status update. It is the operational footprint of the runtime, exposed verbatim. The interface receives this stream and tries to do something useful with it: pretty up the tool calls into pill-shaped cards, group consecutive text into a paragraph, hide some of the noise behind a “thinking” toggle. The interface is doing its best. But it is rendering downstream of a pipe whose primary job, today, is carrying operations rather than meaning.
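To make the point concrete, here is a minimal sketch of such a stream and of the kind of tidying an interface can do with it. The event names and the `collapse_runs` helper are hypothetical, invented for illustration rather than taken from any particular agent stack.

```python
from itertools import groupby

# Hypothetical operational events, shaped like the stream described above.
stream = [
    {"type": "tool_call_started", "tool": "edit_file"},
    {"type": "tool_call_returned", "tool": "edit_file"},
    {"type": "text", "content": "Updating the handler."},
    {"type": "text", "content": "Now the tests."},
    {"type": "tool_call_started", "tool": "run_tests"},
    {"type": "tool_call_returned", "tool": "run_tests"},
]

def collapse_runs(events):
    """Group consecutive events of the same type, as a chat UI might."""
    return [(kind, len(list(run)))
            for kind, run in groupby(events, key=lambda e: e["type"])]

rendered = collapse_runs(stream)
print(rendered)
```

The grouping is tidier than the raw stream, but nothing in it says whether the work is on track, because no event carried that information in the first place.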

This is the part that most clearly is not a UI problem. The fluent fog the user sees is not a rendering choice; it is what comes out of the wire. You can build the most beautiful surface ever rendered, and if the wire is carrying edited file. edited file. edited file, the surface will still tell you nothing about whether built feature scaffold is what just happened, or whether introduced subtle regression in auth middleware is what just happened, or whether waited for the human because the next decision needs context is what just happened. The semantics are not on the wire.

The wire is the load-bearing piece. The interface is downstream of it. The dashboards everyone is racing to design are sitting on top of a pipe whose contents the dashboards cannot magically enrich.

There is a related thing worth noticing, which is that today’s bus has very little structure for the agent to speak in a different register. The bus has no first-class slot for I am at the planning phase, here are the constraints I am holding. It has no first-class slot for I detected an unusual condition and am pausing. It has no first-class slot for the next step is low blast radius or the next step touches the migration boundary. Most of those, in today’s stacks, can only show up as more prose. More text. More narration. Another paragraph in the soliloquy. The runtime has nowhere to emit I am being a senior right now. So it does not.
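As a rough illustration of what first-class slots could mean, the sketch below gives an emission named fields for phase, constraints, a deliberate pause, and blast radius. The `Emission` record, its field names, and the `needs_attention` rule are all assumptions made for this example; no existing runtime is being described.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Emission:
    """Hypothetical structured emission; every field name is an assumption."""
    action: str                           # the operational fact, e.g. "edited file"
    phase: Optional[str] = None           # e.g. "planning", "implementation"
    constraints: List[str] = field(default_factory=list)
    pause_reason: Optional[str] = None    # set when the agent stops on purpose
    blast_radius: Optional[str] = None    # e.g. "low", "touches migration boundary"

def needs_attention(e: Emission) -> bool:
    """A consumer can route on slots instead of parsing prose."""
    return (e.pause_reason is not None
            or e.blast_radius == "touches migration boundary")

routine = Emission(action="edited file", phase="implementation", blast_radius="low")
paused = Emission(action="stopped", phase="implementation",
                  pause_reason="unusual condition detected")

print(needs_attention(routine), needs_attention(paused))
```

The design choice worth noticing is that the consumer never reads the prose. It asks a question of a slot, which is only possible if the slot exists.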

If we want to give the agent a senior’s voice, we have to give it somewhere senior to speak from. That somewhere is the emissions on the bus.

The Quiet Checklist

It is worth pausing here and asking what we actually mean when we say a system is legible. Designers have a kind of unwritten checklist for this, the sort of thing we apply without naming it. Five things tend to show up.

Stable primitives. Named entities with predictable behaviour. A belt in Factorio is always a belt. A row in a spreadsheet is always a row. A ticket in Jira is always a ticket. The vocabulary is finite enough to learn and stable enough to rely on. You can teach somebody what the pieces are without having to teach them all over again next week.

Observable state. What the system is currently doing or holding, exposed to inspection rather than buried in a log. A printer that says out of paper and a printer that just stops working are the same machine in different conditions of legibility. The first is a partner in the work. The second is a small malevolent god.

Defined transitions. The moments when one phase of work hands off to another. Pending becomes in-progress becomes blocked becomes done. Draft becomes review becomes published. The interesting part of any system is rarely the steady state; it is the moments when things change. Without named transitions, change becomes invisible — you can stare at a system in transformation and not know that anything is moving.

An economy of trade-offs. The implicit currency that governs action. In Factorio it is items per second, power consumed, ore mined. In a financial system it is risk, return, liquidity. In an engineering codebase it is blast radius, reversibility, test coverage. Decisions become legible when the cost of being wrong is on the same screen as the action. Without that, every choice is made in the dark.

Causal feedback. Why something happened, not just that it did. The chain that connects an outcome backwards to the choice that produced it. Without this, every surprise becomes inexplicable, and every system becomes superstitious. The team stops debugging and starts performing rituals.
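Two of the items above, observable state and defined transitions, are easy to sketch together. The example below is a minimal illustration, assuming a hypothetical `WorkItem` whose states follow the pending, in-progress, blocked, done sequence mentioned earlier; the class and the allowed-transition table are inventions for this sketch.

```python
# Hypothetical lifecycle for a unit of agent work; the state names follow the text.
ALLOWED = {
    "pending": {"in_progress"},
    "in_progress": {"blocked", "done"},
    "blocked": {"in_progress"},
    "done": set(),
}

class WorkItem:
    def __init__(self, name):
        self.name = name
        self.state = "pending"   # observable state, open to inspection
        self.history = []        # every named transition is recorded with a reason

    def transition(self, new_state, reason):
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.state} -> {new_state} is not a defined transition")
        self.history.append((self.state, new_state, reason))
        self.state = new_state

item = WorkItem("database migration")
item.transition("in_progress", "agent picked up the task")
item.transition("blocked", "waiting on a human decision")
print(item.state, len(item.history))
```

Because each change passes through a named transition with a reason attached, the history also carries a small amount of causal feedback for free.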

Underneath all five there is a sixth thing that runs through everything else, and it is the part designers do not usually write down: a layer of named meaning. The semantic categories that tell anyone working in the system what kind of thing is happening and what counts as doing it well. Safe refactor, acceptable risk, design debt, golden path, fragile subsystem, load-bearing change. These are not features of the runtime. They are features of the shared language a team has built up over time to describe its work. They are what makes the operations interpretable.

Run my friend’s three agents against this list and the absence is striking. The primitives are unstable; what the agent does today may not be what it does tomorrow. State is mostly invisible — a spinner, a thinking pill, a wall of tokens. Transitions are opaque, because there is rarely a clean moment where one phase of work hands off to another; it is one long unbroken stream. The economy is absent. Feedback is post-hoc and narrative rather than causal and structural. And the semantic layer — the named meaning — exists only in her head.

This is why a Factorio for agents, built today on top of today’s emissions, would render the same fluent stream of consciousness with prettier widgets. The pieces of the world it would need to draw on do not yet exist. The map is downstream of the world, and the world is still being narrated rather than shaped.

What Other Fields Have Already Solved

Every time human work has reached a level of distribution and complexity beyond what one person can hold in their head, somebody has had to invent a way of making it legible. We are not the first generation to have this problem. We are arriving late to a conversation several disciplines have already been having, and it is worth borrowing from them rather than starting from scratch.

The closest neighbour is organisation design. A modern company is, structurally, a system for coordinating distributed actors under uncertainty. The tools it has developed for that — roles, reporting lines, decision rights, escalation paths, named processes, OKRs, RACI matrices, postmortems — are not bureaucracy for its own sake. They are infrastructure for legibility. They are how a CEO can know what is happening across three thousand people without reading every Slack message, and how a team of fifty engineers can ship a product no one person on the team understands end to end. The vocabulary is what carries the signal. Without it, you would be back in the stream of consciousness: every employee narrating their keystrokes and nobody understanding the shape of the work. The fact that organisation design has spent a century inventing this vocabulary, and that we are about to need most of it again for agents, is not a coincidence. Coordinating cognition is coordinating cognition, whether the cognition runs on neurons or weights.

The second is cybernetics. Stafford Beer and Ross Ashby spent their careers asking how a system could regulate itself under conditions of partial visibility, and one of the things they produced is a result worth stating plainly. Ashby’s Law of Requisite Variety says that a controller must command at least as much variety as the system it controls: loosely, it must be at least as sophisticated as the thing it governs. Try to manage variety with less variety, and you fail; the system will outrun you. Look at my friend at her three monitors and the law is right there, written in fluorescent ink. Three agents producing more variety, faster, than her chat-shaped interface can absorb. The interface is structurally less sophisticated than the thing it is meant to govern. No amount of redesigning the chat surface will close that gap. Closing the gap means adding variety on the controller side: more dimensions of state, more named transitions, more semantic structure on the wire. Cybernetics has been telling us, for more than sixty years, what kind of thing we need to build. We did not listen, because the systems we were building had not yet outrun us. They are outrunning us now.

The third is management theory, and the part I keep returning to is the old distinction between activity and accomplishment. Activity is what you did. Accomplishment is what changed because you did it. Peter Drucker, the management theorist credited with coining the term “knowledge worker,” spent his career trying to get organisations to stop measuring the first and start measuring the second. I edited the file is activity. I made the auth layer resilient to the SSO migration is accomplishment. The transition from junior to senior, in any well-run team, is largely the transition from reporting activity to reporting accomplishment. The chat surface my friend is staring at is wall-to-wall activity. Of course she is exhausted. She is being briefed by employees who only know how to read out their timesheets.

The fourth is the one I have been wanting to dwell on, because the parallel is uncomfortably exact. Hospital handover, the routine moment when a doctor or nurse passes responsibility for a patient to a colleague at shift change. For most of the twentieth century, handover was a freeform conversation. The departing clinician would describe the patient. The arriving clinician would listen. Important things got dropped. Critical context did not survive the transition. Patients died from this. They died because the medium of handover was language and language is structurally bad at carrying state.

The fix came from outside medicine. SBAR, a four-part protocol usually traced to communication practices on the United States Navy’s nuclear submarines, stands for Situation, Background, Assessment, Recommendation. Situation is what is happening right now. Background is the context the listener needs. Assessment is what the speaker thinks is going on, including confidence. Recommendation is what the speaker thinks should happen next. The protocol was adapted for hospitals in the early 2000s and is now standard across much of healthcare. Handover is no longer freeform. It is structured. Each part is named. The vocabulary is finite. Information that used to vanish into prose now travels reliably from one clinician to the next, because the medium has been given a shape.

What SBAR did was separate the operational stream from the semantic stream. The doing and the meaning live on different planes, and both are designed for deliberately. The doctor still does the work. The handover compresses the work into a vocabulary the receiver can act on. The same person, with the same hands, becomes legible to a colleague who was not there for any of it.

This is precisely the move agent runtimes need to make. The operational stream — edited file. ran test. called tool — is the equivalent of the freeform prose handover. It is everything the worker did, in the order they did it, with no structure imposed. What is missing is the SBAR layer. The named, finite, agreed-upon vocabulary that compresses the work into something a colleague — human or agent — can act on without having to read every line.
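A minimal sketch of what an SBAR-shaped handover might look like for an agent, set beside the operational log it compresses. The four field names come from the protocol; the `Handover` class and the example content, which borrows from the lit-room briefing earlier in this piece, are illustrative assumptions.

```python
from dataclasses import dataclass, fields

# The operational stream: everything the worker did, in order, with no structure.
operational_log = ["edited file", "ran test", "called tool"]

@dataclass
class Handover:
    """SBAR-shaped record; the class itself is a hypothetical illustration."""
    situation: str
    background: str
    assessment: str
    recommendation: str

    def brief(self) -> str:
        # One labelled line per SBAR part, in protocol order.
        return "\n".join(
            f"{f.name.capitalize()}: {getattr(self, f.name)}" for f in fields(self)
        )

handover = Handover(
    situation="Modifying the auth layer to support SSO migration.",
    background="This couples to the permissions service, which the team has flagged as fragile.",
    assessment="About sixty per cent confident; edge-case coverage here is thin.",
    recommendation="Human review before merge.",
)
print(handover.brief())
```

The log and the handover describe the same work. Only the second can be acted on by someone who was not there for it.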

The fifth, briefly, is operations and observability engineering. The discipline that grew up around distributed systems already invented most of what we are about to need. Event taxonomies — finite, agreed-upon, machine-readable. Telemetry schemas — structured emissions that downstream tools can aggregate, alert on, and visualise. Service level objectives — explicit shared promises about what good looks like. Tracing — causal chains across distributed components. Open standards like OpenTelemetry exist precisely because, in a system of many moving parts, the value is not in any single component’s logs. The value is in a shared schema that lets you reason across them. Agent runtimes are distributed systems with a model in the middle, and the runtimes that win the next decade will almost certainly have something that looks like OpenTelemetry for cognition.

The pattern across all five is the same move, made differently each time. They all separate the operational stream from the semantic stream. The doing and the meaning are kept on different planes, and both are designed for deliberately. Without that separation, you have either pure noise — every keystroke, no meaning — or pure abstraction — meaning without anything beneath it to verify. With it, the same activity can be reported at the right altitude for the audience and the moment.

That is what is missing from the agent stack today. Not the operational stream — we have that, in abundance. The semantic stream. The wire on which the meaning travels.

The Kernel: Verbs, Forms, Idioms

If the legibility problem is not really about the model and not really about the interface, then it is worth saying out loud where it actually lives. The crisis is in the emissions and the bus. The wire is undifferentiated. The vocabulary is missing.

What agent stacks need, and do not yet have, is a way for an agent to emit not just what it did but what kind of thing it did — and for that classification to travel across the bus in a form other systems can read. A way to say: this was a retrieval, this was a transformation, this was an evaluation, this was a coordination step, this was an escalation. A way to attach: this is the phase of work I am in. This is the constraint I am holding. This is the goal I am moving towards. This is my confidence. This is the blast radius if I am wrong.

None of that is exotic. Every senior employee carries exactly these dimensions in their head when they work, and surfaces them when they brief a colleague. The question is what vocabulary you give the agent to carry them in.

There are two obvious wrong answers. The first is to fully predefine the vocabulary — to write down the canonical taxonomy of agentic work and require every agent to emit within it. This buys you portability, interoperability, and the comparability across products that makes governance and tooling possible. It also buys you a Procrustean bed. Work is too varied, organisations too idiosyncratic, local culture too important, for any single fixed taxonomy to cover the field without flattening it. Every team’s actual work would have to be smuggled past the taxonomy in the form of prose, and you would be back where you started. The second wrong answer is to let every agent emit whatever vocabulary makes sense for its situation. This buys you fidelity to local culture. It also buys you Babel: nothing composes, nothing aggregates, every team is back in user-space inventing their own world, and the small minority of users who can do that get one good experience while everyone else gets none.

The way out of the dilemma is the move organisation design and operations engineering and SBAR all made before us. Stable substrate, customisable surface. A small, finite, agreed-upon foundation that everything composes against — paired with a layered system for attaching domain and local meaning on top.

Three layers stacked on the wire. They deserve names that travel, and I want to suggest three.

Verbs

The bottom layer. The universal action vocabulary. A finite-ish set of operational primitives that represent the fundamental kind of work being performed, regardless of domain or product. Retrieve. Transform. Evaluate. Route. Persist. Communicate. Execute. Verify. Escalate. Coordinate. Not many more than that.

This is roughly the same instinct behind HTTP’s request methods (GET, POST, PUT, and so on), or the small handful of state transitions every workflow engine settles on. The primitives are dull on their own; that is the point. They are the substrate other layers compose against. They are what makes governance, tooling, observability, and orchestration possible.

Universal verbs matter not because they fully describe the work, but because they create a shared semantic foundation on top of which richer forms, policies, interfaces, and organisational context can compose. Without them, rules have no consistent hook to be invoked, progress has no boundaries to be coherently revealed to users, patterns of friction and failure have no consistent signal to measure.
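One way to picture the hook that verbs provide is a governance rule keyed on the verb rather than on any product-specific action. In the sketch below the verb list is the one given above; the `REQUIRES_APPROVAL` set and the `gate` function are hypothetical, chosen only to show a rule attaching to a stable primitive.

```python
from enum import Enum

class Verb(Enum):
    RETRIEVE = "retrieve"
    TRANSFORM = "transform"
    EVALUATE = "evaluate"
    ROUTE = "route"
    PERSIST = "persist"
    COMMUNICATE = "communicate"
    EXECUTE = "execute"
    VERIFY = "verify"
    ESCALATE = "escalate"
    COORDINATE = "coordinate"

# Hypothetical policy: verbs that need a human in the loop before they run.
REQUIRES_APPROVAL = {Verb.PERSIST, Verb.EXECUTE}

def gate(verb: Verb, target: str) -> str:
    """Apply the same rule to any agent or product that emits this verb."""
    if verb in REQUIRES_APPROVAL:
        return f"hold: {verb.value} on {target} needs approval"
    return f"allow: {verb.value} on {target}"

print(gate(Verb.RETRIEVE, "auth/session.py"))
print(gate(Verb.PERSIST, "users table"))
```

The rule never needs to know which product or domain the action came from, which is what a stable substrate is for.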

Forms

The middle layer. The domain semantics. The product or platform takes the universal verbs and assembles them into named domain-specific activities describing the substantive unit of work underway. PR review. Campaign optimisation. Patient triage. Lead enrichment. Request intake. Audit field.

Forms carry their defining characteristics with them: state, lifecycle, blast radius, reversibility, review-worthiness, confidence, ambiguity.

Many products today are already assembling rough versions of this. But the semantics typically exist as prose inside system prompts, conventions embedded in orchestration layers (such as agent skills, MCP schemas, tool wrappers, or workflows), or abstractions implied by the interface itself. The model is expected to infer the shape of the work at runtime. Sometimes it does. Sometimes the meaning drifts, collapses, or gets hallucinated away entirely because this operational vocabulary was never made a first-class citizen.

Forms are SBAR for a domain: this is what counts as a handover-worthy unit of work, and here is the named vocabulary it travels in.
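As a sketch of a form declared explicitly rather than implied in a system prompt, the example below assembles two forms from universal verbs and attaches a few of their characteristics. The `Form` class, the attribute values, and the `handover_worthy` rule are assumptions for illustration; a real platform would choose its own.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class Form:
    """Hypothetical domain-level unit of work assembled from universal verbs."""
    name: str
    verbs: Tuple[str, ...]       # universal verbs the form is composed of
    lifecycle: Tuple[str, ...]   # ordered, named states
    blast_radius: str            # "low" or "high" in this sketch
    reversible: bool
    needs_review: bool

# Illustrative values only; a team would set these for its own domain.
PR_REVIEW = Form(
    name="PR review",
    verbs=("retrieve", "evaluate", "communicate"),
    lifecycle=("queued", "reading", "commented", "approved"),
    blast_radius="low",
    reversible=True,
    needs_review=False,
)
SCHEMA_MIGRATION = Form(
    name="Schema migration",
    verbs=("transform", "persist", "verify"),
    lifecycle=("planned", "running", "verified"),
    blast_radius="high",
    reversible=False,
    needs_review=True,
)

def handover_worthy(form: Form) -> bool:
    """Surface a form to a human if it is risky or hard to undo."""
    return form.needs_review or not form.reversible or form.blast_radius == "high"

print(handover_worthy(PR_REVIEW), handover_worthy(SCHEMA_MIGRATION))
```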

Idioms

The top layer. The workspace-local meaning. Organisational context layers that orient the work through local conventions, considerations, and operational consequence. Safe refactor. Surgical fix. Design debt. Golden path. Fragile subsystem. Load-bearing change. Migration boundary. Idioms have always existed in well-functioning teams. They were carried, until now, in human heads and Slack threads and the corners of onboarding documents.

The interesting move is to give workspaces a way to declare their idioms explicitly, plug them into the bus, and have them inherited automatically by every agent operating in their context. The platform supplies the grammar; the workspace evolves the language. The idiom layer is what stops the substrate from feeling generic. It is where the work starts to feel like this team’s work and not just work.
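A minimal sketch of declared idioms being inherited by every agent in a workspace. The idiom names come from the examples above; the definitions, the conventions attached to them, and the `Agent` class are invented for illustration.

```python
# Hypothetical workspace declaration; the meanings and conventions are invented.
WORKSPACE_IDIOMS = {
    "load-bearing change": {
        "means": "other modules depend on this behaviour",
        "convention": "pair with a human before merge",
    },
    "safe refactor": {
        "means": "no behaviour change, covered by tests",
        "convention": "merge without review",
    },
}

class Agent:
    def __init__(self, name, idioms):
        self.name = name
        self.idioms = idioms  # shared with the workspace, not re-declared per agent

    def annotate(self, action, idiom):
        entry = self.idioms.get(idiom)
        if entry is None:
            return f"{action} (unclassified)"
        return f"{action} [{idiom}: {entry['convention']}]"

evaluator = Agent("evaluator", WORKSPACE_IDIOMS)
migrator = Agent("migrator", WORKSPACE_IDIOMS)

print(migrator.annotate("rewrote session store", "load-bearing change"))
```

Because both agents hold the same declaration, an idiom the workspace adds later is available to each of them without any per-agent change.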

Verbs, Forms, Idioms. Substrate, surface, dialect.

Put the three layers together and the soliloquy starts to break apart. The universal layer says it was a transformation. The domain layer says it was a refactor of the authentication module, currently in review, with high blast radius because test coverage in this area is thin. The workspace layer says this counts as a load-bearing change and that the team’s convention is to pair with a human before merge. The same agent, the same activity, suddenly legible at three altitudes — and crucially, legible across products, across teams, across time, because the bottom layer is stable. Read the wire and you can answer the question what are you doing? in something other than the operational register. The senior emerges.
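The three altitudes can be sketched as one emission read three ways. The `LayeredEmission` record and its field names are hypothetical; the example values are the ones used in the paragraph above.

```python
from dataclasses import dataclass

@dataclass
class LayeredEmission:
    """Hypothetical emission carrying all three layers; field names are assumptions."""
    verb: str          # universal layer
    form: str          # domain layer
    form_state: str
    blast_radius: str
    idiom: str         # workspace layer
    convention: str

    def describe(self, altitude: str) -> str:
        if altitude == "verb":
            return f"It was a {self.verb}."
        if altitude == "form":
            return (f"It was a {self.form}, currently {self.form_state}, "
                    f"with {self.blast_radius} blast radius.")
        if altitude == "idiom":
            return f"This counts as a {self.idiom}; the convention is to {self.convention}."
        raise ValueError(f"unknown altitude: {altitude}")

emission = LayeredEmission(
    verb="transformation",
    form="refactor of the authentication module",
    form_state="in review",
    blast_radius="high",
    idiom="load-bearing change",
    convention="pair with a human before merge",
)

for altitude in ("verb", "form", "idiom"):
    print(emission.describe(altitude))
```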

A small preview of this stack is already in motion, in user-space, on agentic coding workflows. AGENTS.md is a markdown file that tells an agent how a project, codebase, or team works — its norms, conventions, things to be careful about. It is an idiom file, essentially, written longhand. SKILL.md bundles a piece of behavioural knowledge with the tools needed to act on it — a form, written by a user, declared as a unit of work the agent can call. MCP, the Model Context Protocol, is a standardised way for an agent to talk to tools and services outside its own context — a thin substrate the wire can run on. Three little conventions that compose, sometimes recursively, into something close to the stack I just described, assembled by people who could not wait for the platform to assemble it for them.

Look at the trace from a vanilla agent and you see edited file. edited file. edited file. Look at the trace from one of my friend’s three agents, the one she has wired with her own MCP server and her own AGENTS.md and her own carefully worded SKILL.md files, and you see built feature scaffold. wrote tests. ran tests. detected regression. asked human. Same wrench icons. Same result pills. Entirely different legibility. The Verbs/Forms/Idioms stack, hand-assembled, in markdown, by a single engineer on weekends. It works. The senior is starting to emerge in the only places where the world has been lit enough for her to emerge in.

What is missing is that none of this is on the wire as a structured emission. The legibility is encoded in markdown files and inferred by the model at runtime. It is fragile. It is local. It is available only to the small population of users who can write their own MCP servers and who happen to do work the AGENTS.md format fits. The pattern has been discovered. The protocol has not.

What This Looks Like, Roughly

A few sketches of what the layer above today’s scaffolding might look like once Verbs, Forms, Idioms are first-class citizens of the runtime. These are illustrations, not prescriptions; the actual protocol will be invented in the next few years by people closer to it than I am.

A typed emission stream. Every agent runtime emits, alongside its prose, a structured event for each meaningful unit of work. Verb: one of the universal primitives. Form: the named higher-level action this composes into. Inputs and outputs: typed objects, not blobs of text. State: where in the plan this sits. Confidence: an honest signal of how sure the agent is. Blast radius: a coarse but real indication of how reversible the move is. Idioms in play: which workspace-local concepts apply to this step. The prose continues to exist for humans who want it. The structured stream is what dashboards, audit trails, governance systems, and other agents read.
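To make the shape of such an event concrete, here is a minimal sketch in Python. Everything named in it is an assumption made for illustration: the WorkEvent type, the four verbs in the Verb enum, the three-step blast radius scale, and the 0-to-1 confidence range are not taken from any existing runtime or protocol. The example event borrows the authentication refactor described earlier, and its confidence value is made up.

```python
from dataclasses import dataclass, field, asdict
from enum import Enum
import json


class Verb(Enum):
    # Hypothetical universal primitives; no real protocol fixes this list.
    RETRIEVE = "retrieve"
    TRANSFORM = "transform"
    EVALUATE = "evaluate"
    ESCALATE = "escalate"


class BlastRadius(Enum):
    # Coarse reversibility signal; the three steps are an assumption.
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class WorkEvent:
    verb: Verb                  # universal layer
    form: str                   # domain layer: the named higher-level action
    state: str                  # where in the plan this step sits
    confidence: float           # assumed to be a number in [0, 1]
    blast_radius: BlastRadius
    inputs: dict = field(default_factory=dict)
    outputs: dict = field(default_factory=dict)
    idioms: tuple = ()          # workspace-local concepts in play

    def __post_init__(self):
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    def to_wire(self) -> str:
        """Serialise the event as JSON for dashboards, audits, or other agents."""
        payload = asdict(self)
        payload["verb"] = self.verb.value
        payload["blast_radius"] = self.blast_radius.value
        return json.dumps(payload, sort_keys=True)


# The authentication refactor from earlier, as one structured event.
event = WorkEvent(
    verb=Verb.TRANSFORM,
    form="refactor",
    state="in_review",
    confidence=0.7,  # illustrative value only
    blast_radius=BlastRadius.HIGH,
    inputs={"module": "authentication"},
    idioms=("load-bearing change",),
)
print(event.to_wire())
```

The point of the sketch is only that each dimension becomes a named, typed field a machine can read, instead of a phrase buried somewhere in the narration.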

A domain layer that products own. The platform supplies the verbs. The product builders (the legal-AI teams, the GTM teams, the engineering-tools teams, the finance teams) assemble those verbs into the forms their users actually live inside. Matter opened. Clause flagged. Counterparty risk evaluated. Lead enriched. Sequence scheduled. Account flagged for review. Payment authorised. Reconciliation completed. This is where most product-design value will accumulate in the next few years, and it is also where the current generation of AI products is weakest. Most chat-shaped products are skipping this layer entirely. The few that are not (Harvey in legal, Clay in GTM, Cursor in coding) are quietly demonstrating that the gap between an agent that works and an agent that is legible to its user is exactly the gap of an explicit form layer.

A workspace overlay users can write. A way for a team to extend or rename the domain layer with their own idioms, without having to spin up a server. Something simpler and more declarative than the MCP rabbit hole my friend has been disappearing down on weekends. A short file, easily edited. A protocol the runtime reads. Most users inherit defaults; teams that want to teach the system their own vocabulary can, in something closer to plain English than YAML. The bar to add a new idiom should be the bar to add a glossary entry, not the bar to write an MCP server.
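As a rough illustration of how low that bar could be, the sketch below treats the overlay as a glossary: one "term: meaning" entry per line, merged over platform defaults. The file format, the function names parse_overlay and resolve_idioms, and the wording of the default entry are all hypothetical; no runtime reads such a file today.

```python
# A hypothetical overlay file: one glossary entry per line, '#' for comments.
OVERLAY_TEXT = """\
# Team idioms
Load-bearing change: pair with a human before merge
Fragile subsystem: thin test coverage, treat edits as high blast radius
"""

# Illustrative platform defaults that every workspace would inherit.
PLATFORM_DEFAULTS = {
    "safe refactor": "behaviour-preserving change covered by tests",
}


def parse_overlay(text: str) -> dict[str, str]:
    """Parse 'term: meaning' lines into a dictionary keyed by lower-cased term."""
    idioms = {}
    for line_no, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        term, sep, meaning = line.partition(":")
        if not sep or not term.strip() or not meaning.strip():
            raise ValueError(f"line {line_no}: expected 'term: meaning'")
        idioms[term.strip().lower()] = meaning.strip()
    return idioms


def resolve_idioms(defaults: dict[str, str], overlay: dict[str, str]) -> dict[str, str]:
    """Workspace entries extend the defaults and win where terms collide."""
    return {**defaults, **overlay}


workspace_idioms = resolve_idioms(PLATFORM_DEFAULTS, parse_overlay(OVERLAY_TEXT))
for term, meaning in workspace_idioms.items():
    print(f"{term}: {meaning}")
```

A team that writes nothing simply gets the defaults; a team that adds two lines has taught the system two idioms.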

An interface that renders the structure, not the narration. Once the wire is carrying typed events with named meaning, the surface designer’s job changes shape. They are no longer trying to make a wall of text feel less overwhelming. They are choosing which dimensions of the structured stream to surface at which moments. Confidence, where it matters. Blast radius, where the move is risky. Idioms in play, where local context is decisive. State of the plan, where progress is the question. Trade-offs, where a decision is pending. Dashboards become possible because dashboards always required a vocabulary to draw on. The temporary lens becomes possible — the focused view that appears when something needs attention and disappears when it does not. The progressive disclosure that chat surfaces can only approximate becomes structural. The picture, finally, has something to be a picture of.
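The choice of which dimensions to surface can itself be sketched as a small function over a structured event. This is an assumption-heavy illustration: the event is a plain dictionary, and the field names, the decision_pending flag, and the 0.6 confidence floor are invented here rather than drawn from any real system.

```python
def dimensions_to_surface(event: dict, confidence_floor: float = 0.6) -> list[str]:
    """Pick which dimensions of one structured event deserve the user's attention."""
    shown = []
    if event.get("confidence", 1.0) < confidence_floor:
        shown.append("confidence")      # confidence, where it matters
    if event.get("blast_radius") == "high":
        shown.append("blast_radius")    # blast radius, where the move is risky
    if event.get("idioms"):
        shown.append("idioms")          # idioms, where local context is decisive
    if event.get("decision_pending"):
        shown.append("trade_offs")      # trade-offs, where a decision is pending
    # With nothing to flag, progress is the question: show plan state only.
    return shown or ["state"]


routine = {"confidence": 0.95, "blast_radius": "low", "idioms": []}
risky = {
    "confidence": 0.4,
    "blast_radius": "high",
    "idioms": ["load-bearing change"],
}

print(dimensions_to_surface(routine))
print(dimensions_to_surface(risky))
```

The temporary lens falls out of the same logic: it appears when the returned list is anything other than plain state, and disappears when it is not.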

None of these sketches is the answer. They are illustrations of what becomes possible once the kernel is in place. The work of the field over the next few years is to invent the actual protocol, the actual domain vocabularies, the actual overlay format. Some of that will happen in standards bodies and consortia. Most of it will happen the way standards always actually happen: a few well-designed products will set conventions other products will copy until the conventions harden into expectations, and the expectations harden into protocols.

The thing to notice is that none of the work in this section is interface design. It is closer to data modelling. To grammar design. To ontology engineering. To the parts of API design that have always been quietly the most important part of API design. The interface, in the version of the future I am sketching, becomes the easy bit. The bit upstream of it — the bit that decides what counts as a unit of work, what counts as a state, what counts as a named concept — is where the leverage moves.

A Design Critique, Ten Years Out

A design critique in the interface era has been a critique of action. Could the user find the affordance? Did the control communicate its state? Was the next step obvious? Did the system respond in time? Did the flow carry intention cleanly into operation?

The design critique of the agentic era will be a critique of work. Could the user tell what was underway, what had changed because of it, and what risks it carried? Could the team see which vocabularies shaped the agent’s judgement, which escalation paths governed responsibility, which confidence signals could be trusted, and which local idioms were beginning to drift?

It is worth imagining, briefly, what design will look like once the upstream work has moved.

Picture a design review in 2036. The team is looking at an agentic product for a domain — say, financial operations, or clinical research, or municipal planning, the specifics do not matter. There are still screens on the wall. Plenty of them. The product still has surfaces, controls, dashboards, review flows, exception queues, approval states, and all the ordinary places where users meet the system.

But alongside sequences of screens showing hierarchy, messaging, action affordance, and business logic, the team is now also reviewing the structures that determine whether the work being represented is legible.

In traditional software, friction often emerges at the interaction layer. The flow was confusing. Navigation obscured system state. Information hierarchy failed to direct attention. The interface mismatched the user’s mental model.

In agentic systems, users are increasingly struggling with different kinds of problems. They are supervising multiple workstreams. They are allocating attention across competing escalations. They are deciding when to trust, intervene, override, delegate, or recover. The hardest part is often no longer operating the software directly. It is understanding the shape, progress, risk, and meaning of ongoing work.

The critique starts to sound different because the user needs are different.

In 2026:

Users struggled to discover advanced functionality because the navigation hierarchy reflected the company’s internal product structure rather than how customers thought about their work. So we redesigned the sidebar around task-oriented hubs, introduced cross-functional landing pages, and surfaced related actions contextually inside workflows. This improved feature discoverability and reduced navigation dead-ends, but created growing pressure on the hub pages as more teams competed for primary placement within the navigation surface.

In 2036:

Users supervising multiple agentic workstreams struggled to distinguish between fundamentally different kinds of progress. In exploratory work, progress often appeared as expanding ambiguity. In procedural or evaluative work, ambiguity was usually a warning signal indicating drift or elevated risk. Generic activity states like “generating” or “reviewing” flattened semantically distinct kinds of work into shallow operational representations, instead of meaningful signals of progress.

So we introduced a new status terminology to distinguish between concepts at the idiom layer, allowing the system to represent progress differently depending on the form of work being performed. This improved situational awareness and attention allocation across distributed workstreams, but introduced growing governance challenges as domains demanded increasingly specialised operational vocabularies that we’ll need to maintain.

In 2026:

Users were ignoring notifications because collaboration requests, warnings, confirmations, and passive updates shared similar interruption patterns and visual weight. So we introduced tiered notification treatments across banners, badges, inbox queues, and inline prompts based on urgency and required action. This improved responsiveness to critical events and reduced alert blindness, but increased competition between product teams over which interactions deserved interruptive treatment.

In 2036:

Users managing multiple autonomous workstreams struggled to maintain focus because low-confidence handoffs, routine approvals, and catastrophic failure risks were surfaced through a single alerting panel. So we redesigned alerts around operational consequence rather than event type, separating low-impact requests and recoverable deviations from policy conflicts and high-blast-radius escalations into distinct supervisory surfaces. This improved attention triage and reduced operational overload for users, but made it harder for product teams to predict which combinations of confidence, reversibility, and consequence would cause activity to be presented to users as dangerous versus routine.

In 2026:

Users had difficulty learning advanced functionality because the product exposed too many controls upfront, making the interface feel overwhelming and unpredictable. So we introduced progressive disclosure patterns that surfaced complexity gradually based on user behaviour and task context. This improved approachability and reduced abandonment for new users, but created discoverability challenges for expert users who struggled to build complete mental models of the system’s capabilities.

In 2036:

Users struggled to understand the operational capabilities and limits of agents because delegation boundaries, authority scopes, and escalation conditions were configured via long-form natural language instructions, which made them difficult to review and inconsistently structured across teams. So we introduced explicit delegation controls, with UI elements that make capabilities opt-in, confidence tolerances a sliding scale, and governance policies a shared catalogue of short-form atomic natural language snippets, each configurable at the agent, team, and platform level. This improved constraint legibility and delegation trust and reduced inappropriate agent actions, but increased setup complexity and encouraged teams to over-specify operational behaviour in ways that occasionally led to unintended policy clashes.

It is still design. It is recognisably the same activity — making cognition legible to people, making decisions navigable, making the work of an organisation hang together. It is also unmistakably broader than the product-UI version that has dominated the last twenty years. The interface era was the narrow chapter. The chapter that comes next is closer to organisation design, with semantics and ontology and governance as load-bearing primitives, and visual UI as one projection of a deeper layer.

The high-leverage designer of 2036 looks more like part systems architect, part organisational designer, part governance strategist, part cognitive ergonomist, with visual UI as one instrument among many. The Figma file becomes a downstream implementation artefact. The upstream artefact is the vocabulary the work is emitted in.

This is not a comfortable shift, and we should not pretend it is. A lot of what makes a UI designer good at UI does not transfer cleanly. New muscles need to be developed: systems thinking, operational thinking, organisational theory, ontology design, evaluation design, safety and risk systems. The discipline will look more like the work that psychology and behavioural economics did when they joined the product era: an older intellectual tradition rediscovering itself in a new domain. There is a lineage. Industrial design shaped physical affordances. HCI shaped interactions. Behavioural design shaped decision environments. Agentic systems will shape semantic-operational environments. Each era expanded design’s surface area in response to where complexity moved next. This is just the next move in that sequence.

Which is to say: it is design. It is still design. It is design after the era in which the dominant interface metaphor was a window onto a database.

What Would Actually Help My Friend

So what would actually help? Not another pane of glass.

Not a command centre rendered over a stream of wrench icons and token counts. Not a more beautiful transcript of the same operational fog. We are already very good at recording what agents did. Tool called. File edited. Retrieval completed. Token streamed. The traces are rich with execution and thin on meaning.

The gap is not visibility. It is legibility.

Today’s runtimes can tell you the order in which work happened, but struggle to tell you what kind of work is happening at all. They expose operations more fluently than intentions; motion more clearly than significance. They can narrate the mechanics of a process while remaining strangely silent about its posture, its risk, its reversibility, its uncertainty, its organisational weight. The exhaustion comes not from opacity, but from having to continuously reconstruct meaning from activity.

And so the field reaches instinctively for interface. More dashboards. More traces. More panes. More visual grammar layered over semantically thin wires. But Ashby’s Law cuts deeper than that. A system responsible for coordinating complex work must possess enough representational variety to describe the work in the first place. If the wire can only emit operations, the interface can only rearrange operations. No amount of visual sophistication can conjure meaning that never travelled through the system.

What is missing is a semantic layer. A shared operational vocabulary for representing work itself. The next generation of agent systems will not be defined by richer interfaces for displaying activity. They will be defined by richer semantics for expressing responsibility, coordination, uncertainty, and organisational context across the wire.

Right now, the vocabulary is too small for the world we are asking these systems to manage.

My friend will probably be fine. She is the kind of user every taxonomy ends up being built around, and she has the patience to invent her own when the field does not provide one. The people we should be designing for are the people who will never write their own MCP server or skills, who do not happen to do work that fits a productised mould, and who would still like, very much, to manage a team of three without losing their mind. The vocabulary is the gift we owe them.

It is worth saying out loud that design has done this kind of widening before. Industrial designers absorbed ergonomics and human factors to solve the problem of physical work. HCI absorbed cognitive science to solve the problem of interaction. Behavioural designers absorbed economics and decision research to solve the problem of choice.

Each era’s complexity asked design to learn a new language and grow a new muscle, and each time the field came out larger and stranger than it had been before. The problem of this era is work itself — distributed, semi-autonomous, semantically uneven, increasingly invisible to the people responsible for it. The languages this era will ask us to absorb are the ones that have been working on legible work the longest. Organisation design. Cybernetics. Management theory. The reading list has been waiting.

That is the responsibility hiding underneath the current excitement about agent interfaces.

The interface is not the beginning of the agentic design problem. It is the answer to a question: what is work made of?